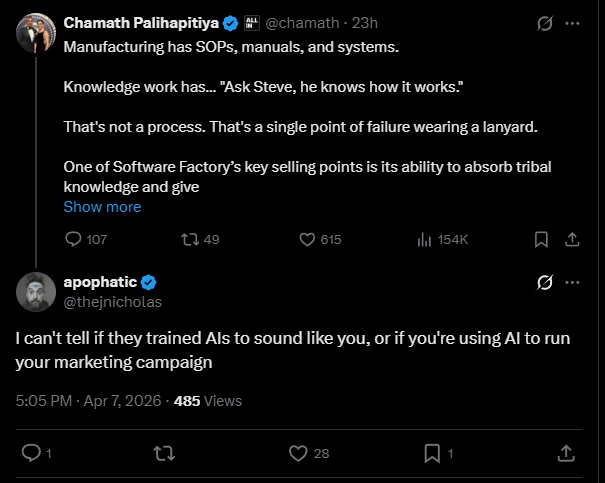

I was three scrolls into an X session I had already promised myself I wouldn’t have when Chamath Palihapitiya appeared on my screen and said something about manufacturing having SOPs and knowledge work having Steve, and Steve is a single point of failure wearing a lanyard, and I stopped scrolling because the sentence sounded like something I had heard before. Not the content. The shape. The way each paragraph did exactly one thing and then stopped and the next paragraph did one thing and then stopped and the rhythm was declaration, pause, pivot, sell. Declaration, pause, pivot, sell. The man was typing in iambic pentameter for venture capitalists and 154,000 people were watching and I was trying to figure out why a billionaire’s X post sounded like it had been generated by the same thing I use to outline my novel.

I replied. I said: “I can’t tell if they trained AIs to sound like you, or if you’re using AI to run your marketing campaign.”

Twenty-eight likes. Which on my account is a ticker-tape parade. But the reply wasn’t a joke. It was a real question I did not have the answer to, and I still don’t, and the reason I still don’t is that the answer might be that the question is broken. That “or” in the middle might be doing something it can’t actually do, which is separate two things that are no longer two things.

I have spent months staring at a robot’s (i.e., AI’s) output. Not using it. Staring at it. Cataloguing the tics. The way a robot builds a paragraph with every sentence structural, no word wasted, no breath taken that doesn’t serve the whole. The way it pivots from observation to implication in exactly three moves. The way it ends with something that feels like insight but functions as a closer, a resonant little button that gives you the sensation of having learned something without requiring you to verify whether you actually did. I know this fingerprint. I have it memorized. And Chamath’s post had it. Not approximately. Not in the neighborhood. The same fingerprint.

Maybe Chamath writes his own posts. Maybe he always has, and the reason a robot sounds like a founder pitching at a board meeting is that it was fed millions of words by founders pitching at board meetings and learned that this is what authority sounds like, this is what confidence sounds like, this modular rhythm is the sound of a person who has money telling a person who wants money how the world works. That’s one version. The other version is that Chamath uses a robot to write his posts, or to polish them, or to “refine his ideas,” which is the phrase people use when they mean “I typed a sentence and the machine wrote the other eleven,” and if that’s the case then the circularity is total. The model learned his voice from his corpus. He uses the model to generate new corpus. The model will retrain on the generated corpus. And the signal folds in on itself, getting smaller and tighter and more compressed until you can’t find the original crease because the original crease has been folded into the fold that was folded into the fold.

Or both are true simultaneously and the distinction has collapsed. Not “collapsed” like it fell apart. “Collapsed” like a wave function. The observation changed the state. The moment the robots learned to sound like the optimization class, the optimization class started sounding like the robots, and now neither of them is the original and both of them are the copy and the word “copy” doesn’t mean anything anymore because a copy requires an original, and the original is a founder’s X post from 2019 that got scraped into a training set that produced a model that now writes the posts that will get scraped into the next training set, and the snake has eaten enough of its own tail that the snake is now mostly tail.

A year ago, “sounds like AI” meant something specific. It meant that weird ChatGPT voice. “Certainly! Here’s a comprehensive overview.” “Let’s delve into.” “It’s important to note.” A dialect so distinct you could spot it from across a room. But that dialect is dying. Not because AI got worse at it but because it got better. The new dialect doesn’t announce itself. It doesn’t say “certainly” or “let’s delve.” It says things like “that’s a single point of failure wearing a lanyard.” It claims every point you make is “load-bearing.” It sounds like a person. It sounds like a specific kind of person. It sounds like the kind of person whose communication has been optimized for engagement since before the models existed, because those people were the training data, and the training data is the voice, and the voice is now everywhere, and the voice sounds like competence, and we are so used to associating that particular cadence with intelligence that we can’t hear it anymore, the way you stop hearing the highway when you’ve lived next to it long enough, except this highway is carrying every thought you read and half the ones you write.

I kept coming back to my own reply, and the thing that started bothering me was that I couldn’t tell if I wrote it, or if I wrote the version of it that months of talking to the robots trained me to write. My sentence had a rhythm too. It had a pivot. It had a structure that looked a lot like the structures I’ve been absorbing through the screen, and the contamination might not be one-directional, it might not be Chamath’s problem or the robot’s problem, it might be my problem, which means it’s your problem, which means the question in my reply wasn’t a question about Chamath at all.

It was a question about whether anyone is still writing from scratch or whether we’re all running on the same borrowed firmware now, all sounding the same, all pivoting at the same beat, all landing on the same resonant little button at the end of the paragraph, and the button doesn’t mean anything, and nobody notices, because the button feels like insight, and feeling like insight has replaced the need for insight to actually be there.
